For reasons unknown to me, AMD decided this year to discontinue funding the effort

Presumably they did not want to see CUDA become the final de facto standard that everyone uses. It nearly did at one point a couple of years ago, despite the lack of openness and lack of AMD hardware support.

I heavily rely on CUDA for many things I do on my personal computer. If this establishes itself as a reliable method to use all the funky CUDA stuff on AMD cards, my next card will 100% be AMD.

If there were a drop-in equivalent to CUDA with AMD, I’d have several AMD cards right now.

After two years of development and some deliberation, AMD decided that there is no business case for running CUDA applications on AMD GPUs. One of the terms of my contract with AMD was that if AMD did not find it fit for further development, I could release it. Which brings us to today.

Now let’s get this working on Nvidia hardware :P

As pointless as it sounds, it would be a great way to test the system and call alternative implementations of each proprietary Nvidia library. It would also be great for debugging and development to provide an API for switching implementations at runtime.
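To make the "switch implementations at runtime" idea concrete, here is a minimal sketch in plain Python. It is not ZLUDA's API or anything Nvidia ships; `Dispatcher`, `saxpy_reference`, and `saxpy_alternative` are hypothetical names made up for illustration, and the two SAXPY routines only stand in for "proprietary library" and "alternative implementation".

```python
from typing import Callable, Dict, List, Optional

# Hypothetical signature shared by every implementation of the routine.
Saxpy = Callable[[float, List[float], List[float]], List[float]]


def saxpy_reference(a: float, x: List[float], y: List[float]) -> List[float]:
    """Stand-in for the 'vendor' implementation: returns a*x + y."""
    return [a * xi + yi for xi, yi in zip(x, y)]


def saxpy_alternative(a: float, x: List[float], y: List[float]) -> List[float]:
    """Stand-in for an alternative implementation of the same routine."""
    out = list(y)
    for i, xi in enumerate(x):
        out[i] += a * xi
    return out


class Dispatcher:
    """Hypothetical registry that routes calls to the currently active backend."""

    def __init__(self) -> None:
        self._impls: Dict[str, Saxpy] = {}
        self._active: Optional[str] = None

    def register(self, name: str, impl: Saxpy) -> None:
        self._impls[name] = impl
        if self._active is None:
            # The first registered backend becomes the default.
            self._active = name

    def use(self, name: str) -> None:
        """Switch the active backend at runtime."""
        if name not in self._impls:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def saxpy(self, a: float, x: List[float], y: List[float]) -> List[float]:
        if self._active is None:
            raise RuntimeError("no backend registered")
        return self._impls[self._active](a, x, y)


dispatcher = Dispatcher()
dispatcher.register("reference", saxpy_reference)
dispatcher.register("alternative", saxpy_alternative)

x, y = [1.0, 2.0, 3.0], [10.0, 20.0, 30.0]
result_reference = dispatcher.saxpy(2.0, x, y)

dispatcher.use("alternative")  # swap backends without touching the call site
result_alternative = dispatcher.saxpy(2.0, x, y)
```

Running the same call through both backends and comparing the results is the debugging use case: if they disagree, one of the implementations has a bug.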

Now we’ll see if Nvidia drops CUDA immediately, or waits until next quarter.

Do LLMs or that AI image stuff run on CUDA?

CUDA is Nvidia’s platform for running general-purpose compute on its GPUs. AI stuff almost always requires GPUs for the best performance.

Nearly all such software supports CUDA (which up to now was Nvidia-only), and some also supports AMD through ROCm, DirectML, ONNX, or some other means, but CUDA is the most common. This will open up more of it to users with AMD hardware.

Thanks, that is what I was curious about. So, good news!

Yes, llama.cpp and its derivatives and Stable Diffusion all run on CUDA, and they also run on ROCm. LLM fine-tuning is mostly CUDA as well; ROCm implementations for it are not as far along, but they are coming.

They are usually released for CUDA first, and if a project gets popular enough, someone will come in and port it to other platforms, which can take a while, especially for ROCm. Apple M-series ports usually appear before ROCm ones, which shows how much the dev community dislikes working with ROCm; a famous example is geohot throwing in the towel after working with ROCm for a while.

This is the best summary I could come up with:

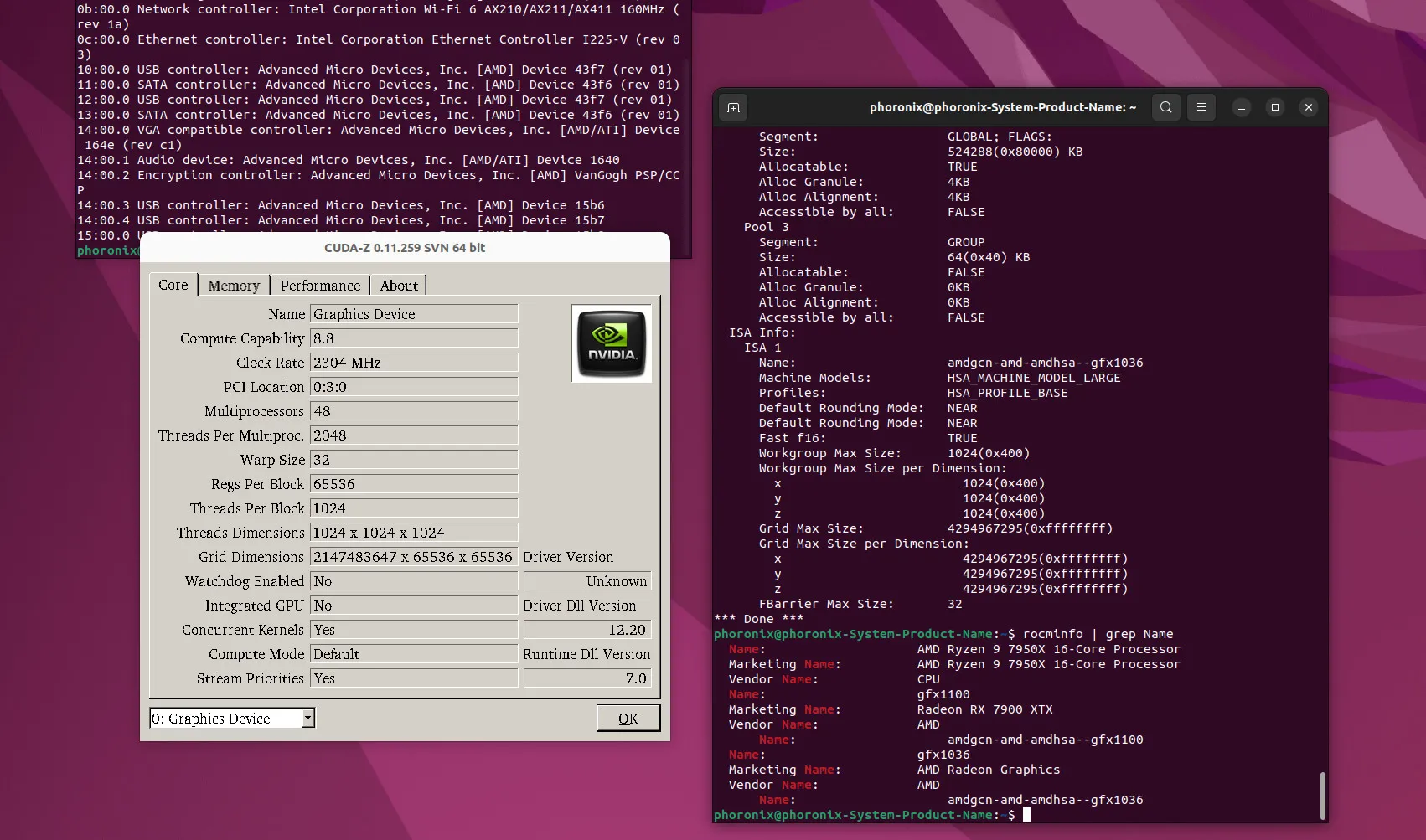

While there have been efforts by AMD over the years to make it easier to port codebases targeting NVIDIA’s CUDA API to run atop HIP/ROCm, it still requires work on the part of developers.

The tooling has improved, such as with HIPIFY to help auto-generate HIP code, but it isn’t a simple, instant, and guaranteed solution, especially if striving for optimal performance.

In practice for many real-world workloads, it’s a solution for end-users to run CUDA-enabled software without any developer intervention.

Here is more information on this “skunkworks” project that is now available as open-source along with some of my own testing and performance benchmarks of this CUDA implementation built for Radeon GPUs.

For reasons unknown to me, AMD decided this year to discontinue funding the effort and not release it as any software product.

Andrzej Janik reached out and provided access to the new ZLUDA implementation for AMD ROCm to allow me to test it out and benchmark it in advance of today’s planned public announcement.

The original article contains 617 words, the summary contains 167 words. Saved 73%. I’m a bot and I’m open source!